Artificial intelligence is transforming how businesses operate in 2026. From AI chatbots and content generators to automation systems and SaaS tools, Large Language Models (LLMs) are becoming essential for digital growth. Instead of relying completely on third-party AI APIs that charge per request, many startups and developers are now choosing VPS hosting for AI projects to build their own AI infrastructure. Deploying open-source LLM models on a VPS allows businesses to reduce long-term costs, improve performance, and maintain full control over their data. If you are planning to launch an AI tool, chatbot, or SaaS platform, understanding how to deploy open-source LLM models on VPS is a strategic advantage. With the right high-performance VPS hosting, you can run powerful AI applications without paying recurring API usage fees.

Why Choose VPS Hosting for AI Models

When hosting AI applications, infrastructure matters. Shared hosting environments do not provide the dedicated resources required for AI workloads. LLMs demand high RAM, strong CPU performance, and fast storage. This is why businesses prefer reliable VPS hosting for AI models. Using affordable VPS hosting for AI applications ensures dedicated server resources, better uptime, and improved response time. Unlike API-based services that increase costs as usage grows, self-hosted LLMs provide predictable monthly expenses. For startups building AI SaaS platforms or agencies offering AI automation services, choosing the best VPS for AI hosting significantly improves profitability and scalability.

Understanding Server Requirements for LLM Deployment

Before deploying an open-source LLM, it is essential to understand the server requirements. Large language models are memory-intensive: the model weights must fit in RAM (or GPU memory), and inference needs substantial processing power. Small models can run on high RAM VPS hosting, while large models require GPU VPS hosting to respond at a usable speed. Choosing scalable VPS hosting lets your AI infrastructure grow with the number of users. Businesses serving users in India can benefit from choosing affordable VPS hosting in India for faster AI response times. Sizing the server correctly at the start also avoids a costly infrastructure change at a later stage.
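As a rough illustration of the sizing question above, the memory needed to hold a model's weights can be estimated from its parameter count and the number of bits stored per weight. The sketch below is an approximation only: the function name and the 20% overhead allowance (for the runtime, context cache, and buffers) are assumptions for illustration, and real usage depends on the runtime and context length.

```python
def estimate_model_memory_gb(params_billions: float, bits_per_weight: int,
                             overhead_fraction: float = 0.2) -> float:
    """Rough memory estimate in GB (10^9 bytes) for loading a model.

    overhead_fraction is an assumed allowance for runtime buffers and the
    context cache; it is not a measured value.
    """
    weight_bytes = params_billions * 1e9 * bits_per_weight / 8
    return weight_bytes * (1 + overhead_fraction) / 1e9


if __name__ == "__main__":
    # Compare the same hypothetical 7-billion-parameter model at three precisions.
    for bits in (16, 8, 4):
        size = estimate_model_memory_gb(7, bits)
        print(f"7B parameters at {bits}-bit: about {size:.1f} GB")
```

Under these assumptions, a plan's RAM should comfortably exceed the estimate, since the operating system and your application also need memory.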

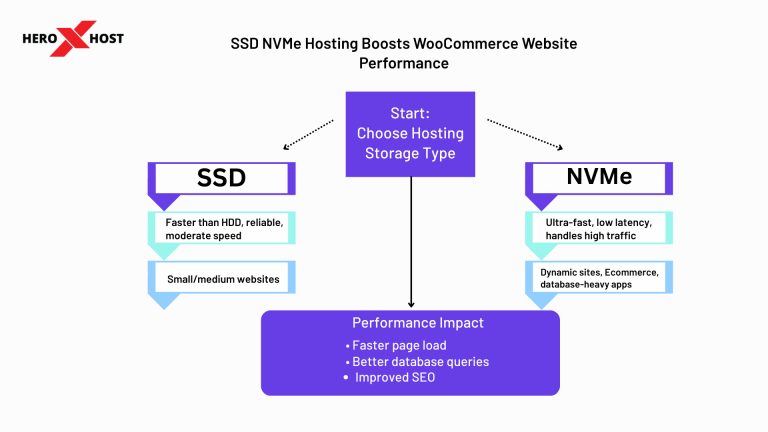

Selecting the Right VPS Hosting Plan

Choosing the correct VPS server for AI hosting is one of the most critical steps in deployment. Not all VPS plans are designed for resource-heavy applications like LLMs. You should prioritize high RAM allocation, fast SSD or NVMe storage, scalable CPU cores, and strong uptime guarantees. High-performance VPS hosting ensures faster model loading and better response times. Businesses that expect growth should choose scalable cloud VPS hosting, allowing easy upgrades without downtime. Making the right hosting decision directly impacts the speed, reliability, and overall user experience of your AI application.

Preparing Your VPS Environment for AI Deployment

Once your VPS hosting plan is active, preparing the environment properly ensures smooth deployment. A stable operating system setup allows AI frameworks and libraries to function efficiently. Proper server configuration reduces compatibility issues and avoids preventable performance problems. A well-prepared VPS server for AI hosting is more stable and better able to handle real-time user requests without crashing. Businesses that invest in reliable VPS hosting experience better uptime and more consistent performance than those on underpowered hosting environments.
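Part of preparing the environment is confirming that the server actually provides the resources the plan promises. The following is a minimal sketch of such a pre-flight check using only the Python standard library. It assumes a Linux or other Unix-like VPS (where `os.sysconf` reports physical memory), and the function name and thresholds are hypothetical.

```python
import os
import shutil
from typing import List


def check_environment(min_ram_gb: float, min_free_disk_gb: float,
                      min_cpu_cores: int, path: str = "/") -> List[str]:
    """Return a list of problems found; an empty list means every check passed."""
    problems = []

    # Total physical memory, computed from page size and page count (Unix only).
    ram_gb = os.sysconf("SC_PAGE_SIZE") * os.sysconf("SC_PHYS_PAGES") / 1e9
    if ram_gb < min_ram_gb:
        problems.append(f"RAM is {ram_gb:.1f} GB, below the required {min_ram_gb} GB")

    # Free space on the filesystem that will hold the model files.
    free_gb = shutil.disk_usage(path).free / 1e9
    if free_gb < min_free_disk_gb:
        problems.append(f"Free disk is {free_gb:.1f} GB, below the required {min_free_disk_gb} GB")

    # os.cpu_count() may return None, so fall back to 1.
    cores = os.cpu_count() or 1
    if cores < min_cpu_cores:
        problems.append(f"{cores} CPU cores found, below the required {min_cpu_cores}")

    return problems


if __name__ == "__main__":
    # Example thresholds only; choose values that match your model.
    issues = check_environment(min_ram_gb=8, min_free_disk_gb=20, min_cpu_cores=4)
    print("Environment looks ready" if not issues else "\n".join(issues))
```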

Deploying an Open-Source LLM Model

After preparing your server, the next step is to choose and deploy an open-source LLM. Many open-source LLMs available today can handle conversational AI, summarization, automation, and similar tasks. However, running large models without optimization results in high memory consumption. Optimization techniques such as quantization reduce that memory consumption, which makes it possible to run AI models even on affordable VPS hosting plans. This allows small businesses to run AI tools without investing in extremely expensive hardware solutions.
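The article does not name a specific optimization technique, so the sketch below assumes quantization, a common one, as the example. It stores each weight as an 8-bit integer plus one shared scale factor instead of a full floating-point number, trading a small rounding error for lower memory use. This is a simplified illustration in plain Python; production runtimes use more elaborate schemes, and the function names here are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric quantization: map floats to integers in the range [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # One shared scale for the whole list; guard against an all-zero input.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize_int8(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.12, -0.5, 0.33, 0.9, -0.77]  # made-up example values
    quantized, scale = quantize_int8(weights)
    restored = dequantize_int8(quantized, scale)
    max_error = max(abs(a - b) for a, b in zip(weights, restored))
    print("quantized:", quantized)
    print(f"largest rounding error: {max_error:.5f}")
```

The rounding error per weight is bounded by half the scale factor, which is why quantized models usually keep most of their quality while needing far less memory.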

Integrating the LLM with Your Application

Deploying the model is only one part of the process. To use it in websites, SaaS solutions, and automation tools, businesses have to integrate the model with their application's interface, for example through an HTTP API. Hosting your own AI system on a secure VPS server gives you full customization and traffic control. AI chatbots, AI content platforms, and automation tools all benefit from full ownership of the infrastructure. With adequately sized VPS hosting, your AI system can stay available and responsive even during peak traffic, which matters most for revenue-generating AI systems.
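One common way to connect a self-hosted model to a website or SaaS product is to place a small HTTP endpoint in front of it. The sketch below uses only the Python standard library and is an assumption-laden illustration: the `/chat` path, the JSON field names, and `generate_reply` (a placeholder standing in for a call into your model runtime) are all hypothetical.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def generate_reply(prompt: str) -> str:
    """Hypothetical placeholder: replace with a call into your model runtime."""
    return f"(model output for: {prompt})"


class ChatHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        if self.path != "/chat":
            self.send_error(404, "Unknown endpoint")
            return
        length = int(self.headers.get("Content-Length", 0))
        try:
            payload = json.loads(self.rfile.read(length))
            prompt = payload["prompt"]
        except (ValueError, KeyError, TypeError):
            self.send_error(400, "Expected a JSON body with a 'prompt' field")
            return
        body = json.dumps({"reply": generate_reply(prompt)}).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # silence per-request logging in this sketch


def make_server(host: str = "127.0.0.1", port: int = 8000) -> HTTPServer:
    """Build the server; call .serve_forever() on the result to start it."""
    return HTTPServer((host, port), ChatHandler)
```

Calling `make_server().serve_forever()` would start listening on the local interface only. Binding to `127.0.0.1` and exposing the service through a reverse proxy is a deliberate choice here, so the model endpoint is never reachable directly from the internet.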

Securing Your AI VPS Server

Security plays a crucial role in deploying open-source LLM models on VPS. Since AI applications may process sensitive user data, protecting your server environment is essential. Secure VPS hosting includes proper firewall configuration, encrypted connections, and access control measures. Businesses handling customer conversations or internal business automation should always prioritize secure VPS hosting with monitoring capabilities. A protected environment builds user trust and prevents data breaches that could damage brand reputation.
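As one small example of the access control measures mentioned above, an AI endpoint can require an API key on every request. The sketch below assumes clients send the key in an `Authorization: Bearer <key>` header, which is a widespread convention rather than anything this article prescribes; the function name is hypothetical. It complements, and does not replace, a firewall and encrypted connections.

```python
import hmac
from typing import Optional


def is_authorized(authorization_header: Optional[str], expected_key: str) -> bool:
    """Return True only if the header carries the expected bearer key."""
    if not authorization_header:
        return False
    scheme, _, presented_key = authorization_header.partition(" ")
    if scheme.lower() != "bearer":
        return False
    # compare_digest takes time independent of where the strings differ,
    # which avoids leaking key contents through response timing.
    return hmac.compare_digest(
        presented_key.strip().encode("utf-8"),
        expected_key.encode("utf-8"),
    )
```

In practice the expected key would be loaded from an environment variable or a secrets store rather than written into the source code.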

Monitoring Performance and Scaling AI Infrastructure

After deployment, continuous monitoring ensures long-term success. AI models dynamically consume server resources depending on traffic and request complexity. Tracking server performance helps prevent slow response times and unexpected downtime.

If your AI SaaS platform or chatbot gains traction, upgrading to a higher RAM VPS or GPU VPS hosting plan ensures seamless scalability. Choosing scalable VPS hosting from the beginning allows businesses to expand without migration challenges. Scalability is one of the biggest advantages of deploying AI models on VPS instead of relying entirely on third-party APIs.
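A simple way to decide when an upgrade is due is to track how much of the server's memory is in use. The sketch below assumes a Linux VPS, where the kernel exposes memory statistics in `/proc/meminfo`. The function names and the 90% upgrade threshold are illustrative assumptions, not recommendations from a specific provider.

```python
def parse_meminfo(text: str) -> dict:
    """Parse /proc/meminfo content into {field: number} (kB for most fields)."""
    values = {}
    for line in text.splitlines():
        name, _, rest = line.partition(":")
        parts = rest.split()
        if parts and parts[0].isdigit():
            values[name.strip()] = int(parts[0])
    return values


def memory_used_fraction(meminfo: dict) -> float:
    """Fraction of RAM in use, based on the MemTotal and MemAvailable fields."""
    total = meminfo["MemTotal"]
    return (total - meminfo["MemAvailable"]) / total


def needs_upgrade(used_fraction: float, threshold: float = 0.9) -> bool:
    """Flag sustained usage at or above the assumed threshold."""
    return used_fraction >= threshold


def read_memory_used_fraction(path: str = "/proc/meminfo") -> float:
    """Read the live value on a Linux server."""
    with open(path) as handle:
        return memory_used_fraction(parse_meminfo(handle.read()))
```

Running `read_memory_used_fraction()` on a schedule and logging the result gives a usage history, so an upgrade decision rests on a trend rather than a single reading.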

VPS Hosting vs API-Based AI Services

Many businesses debate whether to self-host LLMs or use API-based AI services. API solutions offer quick setup but come with usage-based billing and limited customization. As traffic grows, costs can increase rapidly. On the other hand, self-hosting open-source LLM models on high-performance VPS hosting requires initial setup effort but offers predictable monthly expenses and full control. For AI startups, SaaS founders, and agencies planning long-term growth, deploying LLMs on VPS hosting often provides better financial and operational flexibility.
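The cost comparison above can be made concrete with a break-even calculation: the monthly request volume at which usage-based API billing equals a flat VPS price. The sketch below is a simplified model with hypothetical prices. It ignores setup and maintenance time for self-hosting, and it assumes the VPS can actually serve the request volume in question.

```python
import math


def monthly_api_cost(requests_per_month: int, api_cost_per_1k_requests: float) -> float:
    """Usage-based cost: grows in proportion to request volume."""
    return requests_per_month / 1000 * api_cost_per_1k_requests


def breakeven_requests(vps_monthly_cost: float, api_cost_per_1k_requests: float) -> int:
    """Monthly requests at which API spend reaches the flat VPS price."""
    if api_cost_per_1k_requests <= 0:
        raise ValueError("API cost per 1,000 requests must be positive")
    return math.ceil(vps_monthly_cost / api_cost_per_1k_requests * 1000)


if __name__ == "__main__":
    # Hypothetical prices for illustration only.
    vps_price = 40.0       # flat monthly VPS fee
    api_price_per_1k = 2.0  # API charge per 1,000 requests
    print("Break-even volume:", breakeven_requests(vps_price, api_price_per_1k), "requests per month")
    print("API cost at 50,000 requests:", monthly_api_cost(50_000, api_price_per_1k))
```

Below the break-even volume the API is cheaper on paper; above it, the flat VPS fee wins, which is the "predictable monthly expenses" argument expressed in numbers.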

Conclusion: Is Deploying Open-Source LLMs on VPS Worth It?

Deploying open-source LLM models on VPS in 2026 is no longer limited to large enterprises. With affordable VPS hosting options and powerful open-source models available, businesses of all sizes can build their own AI infrastructure. By selecting high-performance and scalable VPS hosting, optimizing model performance, securing the environment, and planning for future growth, you can successfully launch AI chatbots, automation tools, and SaaS AI platforms. Businesses that invest in reliable VPS hosting for AI applications gain cost efficiency, data privacy, and long-term scalability. Controlling your own AI infrastructure is not just a technical decision; it is a strategic move for sustainable digital growth.

Frequently Asked Questions

1. Can open-source LLMs run on affordable VPS hosting?

Ans. Yes, optimized smaller models can run efficiently on high-RAM VPS hosting, making AI deployment accessible for startups and small businesses.

2. Do I need GPU VPS hosting for AI models?

Ans. GPU VPS hosting significantly improves speed and performance for larger models, but smaller models can operate on high-performance CPU VPS hosting.

3. Why is VPS hosting better than shared hosting for AI deployment?

Ans. VPS hosting provides dedicated RAM and CPU resources required for AI workloads, while shared hosting lacks the stability needed for resource-intensive applications.

4. How much does it cost to deploy LLM models on VPS?

Ans. Costs depend on server configuration. Budget VPS hosting plans can support smaller models, while larger models may require high RAM or GPU VPS hosting plans.

5. Is self-hosted AI more secure than API-based AI?

Ans. When deployed on secure VPS hosting with proper protection measures, self-hosted AI offers greater data privacy and infrastructure control.