Artificial intelligence has moved from an experimental project in research labs to critical infrastructure. Between 2026 and 2028, AI is expected to evolve from a tool for gaining a competitive edge into an essential operational component. Organizations will stop asking whether to adopt AI and start asking whether their systems can support it. Hosting will be fundamental to this evolution.

AI workloads differ significantly from conventional web applications. They demand more memory, require sustained CPU or GPU performance, need low latency, and can produce unpredictable surges in traffic. For organizations deploying tools powered by large language models, AI agents, automation systems, and SaaS solutions, infrastructure has moved from a secondary consideration to a key growth initiative. Businesses that upgrade their virtual private servers and hosting architecture now will be positioned to scale effectively. Those that postpone will face performance limits, rising costs, and security vulnerabilities.

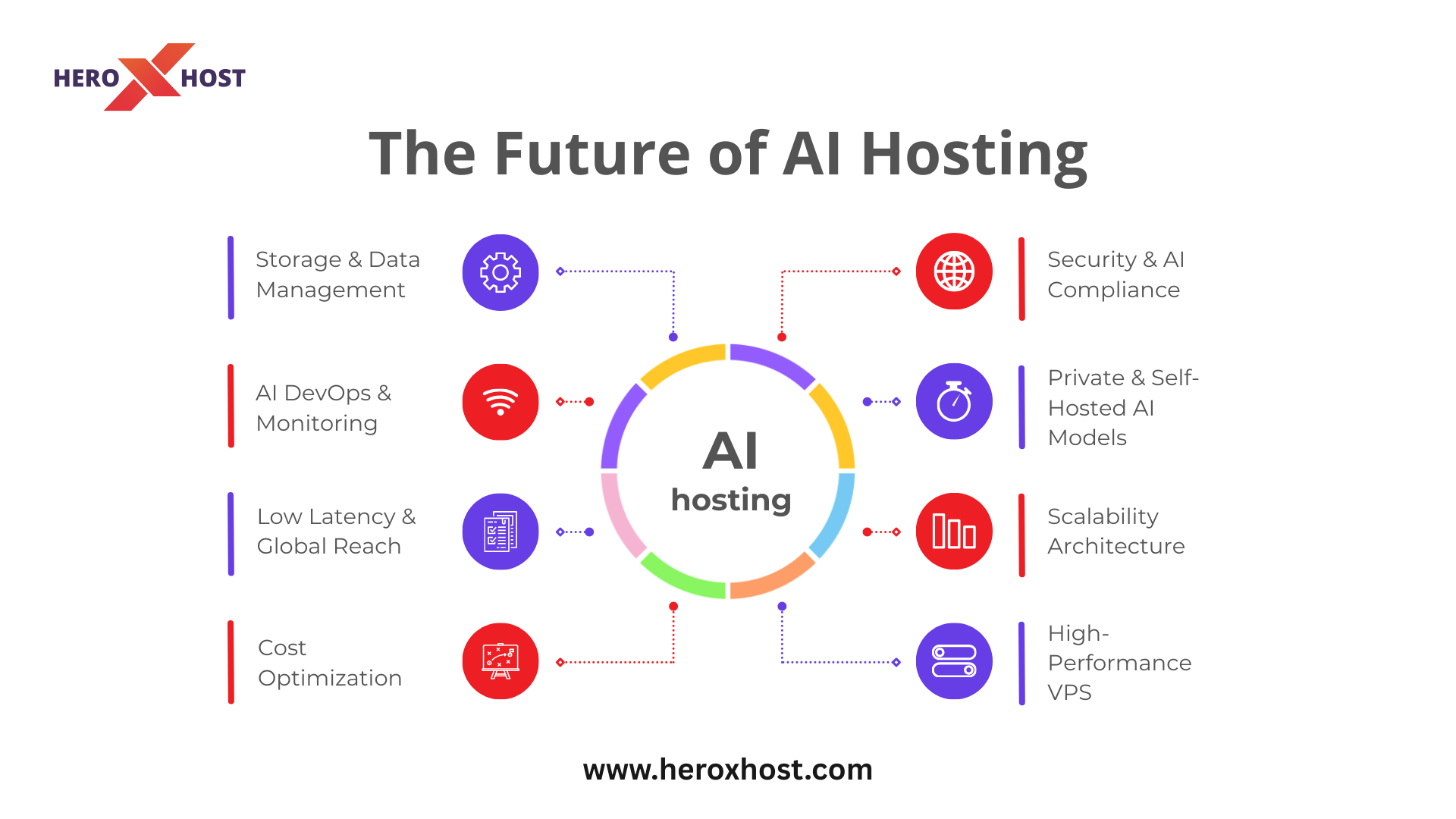

The future of AI hosting will involve more than faster servers. It will involve scalability, oversight, regulatory compliance, optimization, and cost management. Over the next three years, private LLM deployments and edge inference frameworks will change how organizations approach VPS setups and AI infrastructure strategy.

AI Workloads Will Demand Infrastructure-Level Thinking

Traditional hosting was built for predictable web traffic. A website receives visitors, serves content, and processes basic database queries. AI applications are entirely different. A single inference request to a large language model can consume gigabytes of RAM and push CPU cores to sustained utilization. When businesses deploy AI chatbots, recommendation engines, document analyzers, or AI agents, the hosting layer becomes the engine room of innovation.
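To make the memory claim concrete, here is a rough sizing sketch. It assumes memory is dominated by model weights (parameter count times bytes per parameter) plus a flat overhead allowance for runtime buffers. The 20% overhead default and the function name are illustrative assumptions, not measured values; real usage depends on context length, batch size, and the inference runtime.

```python
# Bytes needed to store one parameter at common numeric precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def estimate_memory_gb(params_billions: float, precision: str,
                       overhead_fraction: float = 0.2) -> float:
    """Rough RAM estimate in GB (decimal) for holding a model in memory.

    overhead_fraction is an assumed allowance for caches and buffers,
    not a measured figure.
    """
    if precision not in BYTES_PER_PARAM:
        raise ValueError(f"unknown precision: {precision}")
    # 1 billion parameters at 1 byte each is 1 GB, so the units cancel.
    weights_gb = params_billions * BYTES_PER_PARAM[precision]
    return weights_gb * (1.0 + overhead_fraction)


for precision in ("fp16", "int8", "int4"):
    print(precision, round(estimate_memory_gb(7, precision), 1), "GB")
```

An estimate like this is only a starting point for choosing a VPS plan; load testing with real prompts is what confirms whether the allocation is enough.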

Between 2026 and 2028, AI applications will move from basic integrations to systems that operations depend on. Customer service will rely on AI assistants. Internal processes will be streamlined with AI. SaaS platforms will build advanced decision-making systems into their core features. As these technologies expand, organizations will need VPS environments built for continuous AI workloads rather than sporadic demand.

This means organizations need to rethink how they allocate resources. More RAM, NVMe storage, dedicated CPU cores, and faster networking will be standard requirements rather than luxury upgrades. Companies that treat VPS as a core part of their AI infrastructure, rather than as ordinary hosting, will be better placed to handle growth without abrupt architectural changes.

The Rise of Private and Self-Hosted AI Models

In the first wave of AI adoption, many companies depended heavily on external APIs. This approach is convenient, but it creates vendor dependence, variable pricing, and compliance concerns. Between 2026 and 2028, a growing number of organizations will turn to self-managed, open-source LLM deployments on VPS servers.

Deploying AI models on a VPS gives companies more control: control over data security, control over response times, and control over costs. Rather than paying per token for an external API, businesses can run tailored models on flexible VPS setups, which makes infrastructure costs easier to forecast. This matters most for SaaS companies handling millions of AI requests every month.
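The trade-off between per-token pricing and a fixed monthly VPS cost can be sketched as a simple break-even calculation. Every figure below is a hypothetical placeholder, not a real price from any provider, and the comparison ignores engineering time, maintenance, and any quality difference between models.

```python
import math


def monthly_api_cost(requests_per_month: int, tokens_per_request: int,
                     price_per_1k_tokens: float) -> float:
    """Cost of serving all requests through a per-token API."""
    return requests_per_month * tokens_per_request / 1000 * price_per_1k_tokens


def break_even_requests(vps_monthly_cost: float, tokens_per_request: int,
                        price_per_1k_tokens: float) -> int:
    """Monthly request count at which a fixed-cost VPS matches the API bill."""
    cost_per_request = tokens_per_request / 1000 * price_per_1k_tokens
    if cost_per_request <= 0:
        raise ValueError("cost per request must be positive")
    return math.ceil(vps_monthly_cost / cost_per_request)


# Hypothetical inputs: replace with your own measured values.
threshold = break_even_requests(vps_monthly_cost=200.0,
                                tokens_per_request=2000,
                                price_per_1k_tokens=0.25)
print("Self-hosting breaks even at", threshold, "requests per month")
```

Below the break-even volume the API is cheaper on raw infrastructure cost; above it, the fixed VPS cost is spread over more requests.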

Global regulatory standards for AI and data security are also becoming stricter. Organizations that handle sensitive customer information will likely favor private LLM setups on secure VPS platforms. Running AI systems in-house can reduce exposure and make compliance easier to demonstrate. The advantage will go to organizations that build private, controlled AI environments instead of depending entirely on outside providers.

Performance Optimization Will Define Competitive Advantage

Speed has always mattered online. With AI applications, speed becomes even more critical. Latency directly impacts user experience. An AI chatbot that responds in one second feels intelligent. One that responds in six seconds feels broken. Between 2026 and 2028, performance optimization at the VPS level will define customer satisfaction.

AI hosting performance depends on multiple factors working together. CPU allocation must prevent noisy-neighbor interference. RAM must be sufficient to prevent swapping during inference. NVMe storage reduces model loading time. Network optimization ensures fast API responses. Businesses will need VPS providers capable of delivering stable, isolated resources rather than oversold environments.

Beyond raw hardware, optimization techniques such as model quantization, batching, caching, and load balancing will become mainstream. Companies that understand how to optimize their VPS infrastructure for AI workloads will reduce operational costs while delivering superior performance. In a market where AI features become standard, performance becomes the differentiator.
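Of the techniques listed, caching is the simplest to illustrate. The sketch below wraps a model call in a small least-recently-used cache so that repeated prompts skip inference entirely. The `generate` callable stands in for whatever inference function a deployment uses and is an assumption here; caching like this only suits prompts whose answers are deterministic and not user-specific.

```python
from collections import OrderedDict
from typing import Callable


class ResponseCache:
    """LRU cache in front of a model call (the callable is hypothetical)."""

    def __init__(self, generate: Callable[[str], str], max_entries: int = 1024):
        self._generate = generate
        self._max_entries = max_entries
        self._entries = OrderedDict()
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(prompt: str) -> str:
        # Normalize case and whitespace so trivially different prompts match.
        return " ".join(prompt.lower().split())

    def get(self, prompt: str) -> str:
        key = self._key(prompt)
        if key in self._entries:
            self._entries.move_to_end(key)  # mark as most recently used
            self.hits += 1
            return self._entries[key]
        self.misses += 1
        response = self._generate(prompt)
        self._entries[key] = response
        if len(self._entries) > self._max_entries:
            self._entries.popitem(last=False)  # evict least recently used
        return response


cache = ResponseCache(lambda prompt: "echo: " + prompt, max_entries=2)
cache.get("What are your opening hours?")
cache.get("what are your  opening hours?")
print("hits:", cache.hits, "misses:", cache.misses)
```

The hit and miss counters make it easy to check whether the cache is actually saving inference work before relying on it.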

Scalability Will No Longer Be Optional

AI adoption rarely grows slowly. When a feature resonates with users, demand spikes rapidly. A chatbot deployed to assist 500 users today may serve 50,000 next quarter. Infrastructure that cannot scale elastically will break under pressure.

Between 2026 and 2028, businesses must design AI hosting architecture with horizontal and vertical scalability in mind. Vertical scaling allows upgrading VPS resources such as RAM and CPU quickly. Horizontal scaling allows distributing workloads across multiple VPS instances. Both strategies must be built into the architecture from the beginning.
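For horizontal scaling, a first capacity estimate is the number of VPS instances needed to absorb peak traffic with some headroom. The sketch below assumes you have measured how many requests per second one instance sustains; the headroom fraction and the minimum of two instances are illustrative choices rather than recommendations.

```python
import math


def instances_needed(peak_rps: float, per_instance_rps: float,
                     headroom: float = 0.3, min_instances: int = 2) -> int:
    """Instances required to serve peak_rps while keeping spare capacity.

    headroom is the fraction of each instance's capacity left unused.
    """
    if per_instance_rps <= 0:
        raise ValueError("per_instance_rps must be positive")
    if not 0 <= headroom < 1:
        raise ValueError("headroom must be in [0, 1)")
    usable_rps = per_instance_rps * (1 - headroom)
    return max(min_instances, math.ceil(peak_rps / usable_rps))


# Hypothetical figures: 100 requests/s at peak, 10 requests/s per instance.
print(instances_needed(peak_rps=100, per_instance_rps=10, headroom=0.5))
```

Keeping a minimum of two instances means a single VPS failure does not take the service offline, which ties capacity planning to redundancy.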

Scalability planning also includes redundancy and failover systems. AI-powered SaaS platforms cannot afford downtime. Customers expect continuous availability. Businesses must prepare multi-VPS architectures, load balancers, and backup strategies that protect AI services from disruption. AI hosting will move from reactive upgrades to proactive capacity planning.
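A minimal sketch of the failover idea: a round-robin balancer that skips backends marked unhealthy. The backend names are hypothetical, and a production setup would normally use a dedicated load balancer with active health checks rather than application code like this.

```python
from typing import Iterable


class RoundRobinBalancer:
    """Rotates across backends, skipping any that are marked down."""

    def __init__(self, backends: Iterable[str]):
        self._backends = list(backends)
        if not self._backends:
            raise ValueError("at least one backend is required")
        self._healthy = set(self._backends)
        self._next = 0

    def mark_down(self, backend: str) -> None:
        self._healthy.discard(backend)

    def mark_up(self, backend: str) -> None:
        if backend in self._backends:
            self._healthy.add(backend)

    def pick(self) -> str:
        # Try each backend at most once per call, starting at the cursor.
        for _ in range(len(self._backends)):
            candidate = self._backends[self._next]
            self._next = (self._next + 1) % len(self._backends)
            if candidate in self._healthy:
                return candidate
        raise RuntimeError("no healthy backends available")


# Hypothetical VPS instance names.
balancer = RoundRobinBalancer(["vps-a", "vps-b", "vps-c"])
balancer.mark_down("vps-b")
print([balancer.pick() for _ in range(4)])
```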

Security and AI Compliance Will Intensify

As AI becomes more deeply integrated into business functions, its attack surface grows. AI systems can be compromised through prompt injection, data poisoning, and unauthorized access. VPS environments that run AI applications need to be hardened against these new risks.

From 2026 to 2028, securing AI will take more than firewalls and SSL certificates. It will require robust access controls, encryption of data at rest and in transit, management of model access, effective logging, and anomaly detection. Organizations running AI models on virtual private servers should ensure adequate isolation between their services and protect training data from compromise.
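Two of these controls, model access management and logging, can be sketched together: an API key check that stores only key hashes, compares them in constant time, and records every decision. The logger name, client identifier, and key values are hypothetical, and this is an illustration of the idea rather than a complete authentication system.

```python
import hashlib
import hmac
import logging
from typing import Iterable

logger = logging.getLogger("ai_gateway")  # hypothetical logger name


def hash_key(api_key: str) -> str:
    """Store and compare hashes so raw keys never sit in configuration."""
    return hashlib.sha256(api_key.encode("utf-8")).hexdigest()


def is_authorized(presented_key: str, allowed_key_hashes: Iterable[str],
                  client_id: str) -> bool:
    """Check a presented key against allowed hashes and log the outcome."""
    presented_hash = hash_key(presented_key)
    granted = False
    for allowed_hash in allowed_key_hashes:
        # compare_digest avoids leaking match position through timing.
        if hmac.compare_digest(presented_hash, allowed_hash):
            granted = True
    logger.info("model access %s for client=%s",
                "granted" if granted else "denied", client_id)
    return granted


allowed = {hash_key("example-key-123")}  # placeholder key for illustration
print(is_authorized("example-key-123", allowed, client_id="demo-client"))
print(is_authorized("wrong-key", allowed, client_id="demo-client"))
```

Logging both granted and denied requests gives the audit trail that later compliance reviews tend to ask for.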

Regulatory frameworks will also become stricter. Authorities are beginning to introduce AI-specific regulations covering transparency, fairness, and accountability. The environments hosting these AI systems must support thorough logging, auditing, and secure data retention. Businesses that proactively build secure AI infrastructure on virtual private servers will reduce legal exposure and build trust with their customers.

Cost Discipline Will Separate Winners from Burners

AI infrastructure can become expensive quickly. Businesses experimenting without structured hosting strategies often face unexpected cloud bills. Between 2026 and 2028, cost optimization will become central to AI hosting decisions.

VPS environments offer a more predictable cost structure than large hyperscale cloud environments while remaining scalable. Businesses can configure dedicated resources tailored to their workload rather than paying for the unpredictability of auto-scaling. By optimizing model size, inference batching, and resource allocation, companies can significantly reduce operational expenses.

Cost discipline also involves infrastructure efficiency. Running oversized models on underutilized servers wastes capital. Conversely, under-provisioned VPS environments cause performance degradation. Strategic planning and monitoring ensure that AI workloads operate at peak efficiency without unnecessary overhead.
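A right-sizing check can be as simple as comparing observed utilization against two thresholds. The sketch below takes utilization samples as fractions between 0 and 1; the 30% and 80% thresholds are illustrative assumptions and should be tuned to the workload.

```python
import statistics
from typing import Sequence


def sizing_recommendation(utilization: Sequence[float],
                          low: float = 0.30, high: float = 0.80) -> str:
    """Return 'downsize', 'upsize', or 'keep' from utilization samples."""
    if not utilization:
        raise ValueError("at least one utilization sample is required")
    average = statistics.fmean(utilization)
    peak = max(utilization)
    if peak < low:
        # Even the busiest moment leaves most of the server idle.
        return "downsize"
    if average > high:
        # Sustained load near capacity leaves no room for spikes.
        return "upsize"
    return "keep"


# Hypothetical CPU utilization samples from one VPS.
print(sizing_recommendation([0.10, 0.20, 0.15]))
print(sizing_recommendation([0.90, 0.85, 0.95]))
```

Using the peak for the downsize decision and the average for the upsize decision is a deliberately conservative choice: it avoids shrinking a server that is occasionally busy.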

Edge AI and Distributed Inference Will Expand

AI applications are moving closer to users. Real-time analytics, autonomous systems, IoT integrations, and region-specific AI services often require lower latency than centralized servers can provide. Between 2026 and 2028, edge AI deployments will grow significantly.

Businesses may deploy region-based VPS instances to reduce response times for global users. Distributed inference systems will allow AI requests to be processed closer to end-users rather than routed through distant data centers. This reduces latency and enhances user experience.
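Region selection can be sketched as picking the region with the lowest median latency across a few probes. The region names and latency figures below are made up for illustration, and how the probes are collected (ping, HTTP timing, client-side measurement) is left open.

```python
import statistics
from typing import Mapping, Sequence


def pick_region(probes_ms: Mapping[str, Sequence[float]]) -> str:
    """Choose the region whose latency probes have the lowest median."""
    medians = {
        region: statistics.median(samples)
        for region, samples in probes_ms.items()
        if samples  # ignore regions with no successful probes
    }
    if not medians:
        raise ValueError("no latency samples available")
    return min(medians, key=medians.get)


# Hypothetical probe results in milliseconds.
probes = {
    "mumbai": [40.0, 45.0, 300.0],
    "frankfurt": [120.0, 125.0, 130.0],
}
print(pick_region(probes))
```

The median is used instead of the mean so that a single slow probe does not push traffic away from an otherwise nearby region.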

Edge-ready VPS infrastructure will play a major role in enabling this transition. Companies that architect geographically distributed AI hosting strategies will serve international markets more effectively while maintaining performance consistency.

AI DevOps Will Become Standard Practice

Managing AI infrastructure requires more than traditional DevOps. Model updates, version control, deployment pipelines, monitoring, and rollback mechanisms must be integrated into VPS-based environments. Between 2026 and 2028, AI DevOps practices will become standardized.

Businesses will adopt automated deployment pipelines for AI models. Continuous monitoring systems will track CPU utilization, memory usage, latency, and request throughput. Alerts will detect anomalies before they impact users. VPS hosting environments must support these advanced monitoring tools.
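As an illustration of latency alerting, the sketch below keeps a rolling window of recent request latencies and flags any new sample that sits far above the window's mean. The window size, the three-sigma threshold, and the one-millisecond floor on the deviation are assumed defaults, not tuned values, and a real deployment would usually rely on an established monitoring stack.

```python
import statistics
from collections import deque


class LatencyMonitor:
    """Flags latency samples far above the recent rolling average."""

    def __init__(self, window: int = 100, threshold_sigmas: float = 3.0,
                 min_samples: int = 10, min_stdev_ms: float = 1.0):
        self._samples = deque(maxlen=window)
        self._threshold_sigmas = threshold_sigmas
        self._min_samples = min_samples
        self._min_stdev_ms = min_stdev_ms

    def observe(self, latency_ms: float) -> bool:
        """Record a sample and return True if it looks anomalous."""
        is_anomaly = False
        if len(self._samples) >= self._min_samples:
            mean = statistics.fmean(self._samples)
            # Floor the deviation so a perfectly flat history does not
            # turn every tiny increase into an alert.
            stdev = max(statistics.pstdev(self._samples), self._min_stdev_ms)
            is_anomaly = latency_ms > mean + self._threshold_sigmas * stdev
        self._samples.append(latency_ms)
        return is_anomaly


monitor = LatencyMonitor()
for i in range(20):
    monitor.observe(100.0 + 2.0 * (i % 2))  # steady baseline traffic
print(monitor.observe(500.0))  # a sudden slow request
```

The same pattern applies to CPU utilization, memory usage, or throughput by feeding in a different metric.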

The organizations that treat AI infrastructure as a living system rather than a static deployment will maintain stability and innovation simultaneously. AI DevOps will ensure consistent performance while allowing rapid experimentation.

Why Businesses Must Act Now

The future of AI hosting is not theoretical. It is unfolding now. Businesses that wait until their AI systems outgrow their hosting infrastructure will face expensive migrations and service disruptions. Preparing between 2026 and 2028 means designing VPS architecture that anticipates growth rather than reacts to it.

Strategic preparation involves auditing current workloads, forecasting AI adoption, selecting scalable VPS configurations, implementing monitoring systems, and building security-first architecture. AI will only become more integrated into operations. Hosting must evolve in parallel.

The companies that invest early in AI-ready VPS infrastructure will move faster, scale more smoothly, and operate more efficiently than competitors who treat hosting as an afterthought.

Why Heroxhost Is Built for High-Performance AI VPS Hosting

As AI applications grow more demanding between 2026 and 2028, infrastructure is no longer just a backend utility—it becomes the foundation of innovation. This is where Heroxhost positions itself differently. High-performance VPS at Heroxhost is not designed merely for hosting websites; it is engineered to handle resource-intensive workloads such as LLM inference, AI APIs, SaaS automation engines, and intelligent chat systems that require sustained CPU power, high RAM allocation, and ultra-fast storage performance.

AI workloads are unforgiving. When a model processes user queries, generates responses, or runs data analysis pipelines, every millisecond matters. Heroxhost leverages NVMe SSD storage for faster model loading times, reduced latency, and improved I/O performance, which is critical when AI applications constantly read and write data. Combined with dedicated resources and stable virtualization environments, this ensures that your VPS performance remains consistent even during peak traffic or heavy inference cycles.

High performance is not only about speed; it is about stability under pressure. Many AI-driven businesses experience sudden growth spurts when a feature gains traction. Heroxhost’s scalable VPS architecture allows seamless vertical upgrades, enabling businesses to expand RAM, CPU cores, and storage without complex migrations. This flexibility ensures that AI platforms can scale confidently without service interruptions or costly infrastructure overhauls.

Security is equally important when hosting AI applications that process user data, proprietary datasets, or internal business intelligence. Heroxhost integrates strong security layers, including advanced firewalls, SSL support, and proactive monitoring systems, helping protect AI environments from unauthorized access or emerging threats. When combined with 24/7 technical support, businesses gain not just a VPS server, but a reliable infrastructure partner ready to support long-term AI growth.

For startups building their first AI SaaS product or enterprises deploying private LLM systems, Heroxhost's high-performance VPS solutions provide the balance of speed, control, scalability, and cost predictability required in modern AI hosting. In a future where AI becomes central to business operations, choosing the right VPS infrastructure is not optional; it is strategic.

Conclusion

The period between 2026 and 2028 will redefine AI hosting standards. Infrastructure will become the silent backbone of intelligent systems powering SaaS platforms, automation tools, AI agents, and enterprise workflows. Businesses must prepare for increased resource demands, scalability challenges, security complexities, and regulatory scrutiny.

VPS hosting will play a central role in this transformation. It offers the control, predictability, and scalability required to host AI workloads effectively. Companies that proactively design optimized VPS environments for AI will gain performance advantages, cost efficiency, and operational resilience.

The future of AI is not just about smarter models. It is about smarter infrastructure decisions. Choose Heroxhost, which provides the fastest web hosting in India. The businesses that align their hosting strategy with AI growth today will lead tomorrow.

Frequently Asked Questions (FAQs)

1. Why is VPS hosting important for AI applications between 2026 and 2028?

VPS hosting provides dedicated resources, predictable performance, and scalability, which are critical for handling AI workloads that demand high RAM, CPU stability, and low latency.

2. Can businesses rely only on third-party AI APIs in the future?

While APIs are convenient, many businesses are shifting toward self-hosted AI models on VPS servers to gain cost control, data privacy, and performance optimization.

3. What VPS configuration is ideal for AI workloads?

AI applications typically require high RAM, NVMe storage, dedicated CPU cores, and optimized network bandwidth. The exact configuration depends on model size and request volume.

4. How can companies reduce AI hosting costs?

Cost optimization can be achieved through right-sized VPS configurations, model quantization, efficient batching, and continuous monitoring of resource usage.

5. Will AI hosting require new security practices?

Yes. AI introduces unique risks such as data leakage and prompt injection. Businesses must implement strong encryption, access controls, monitoring, and compliance frameworks.

6. Is scalability really that critical for AI applications?

Absolutely. AI adoption can grow rapidly, and infrastructure that cannot scale smoothly can cause downtime and performance issues.